Connecting Local to Global: Inside Mistral AI's Worldwide Hackathon

Recap of the Multi-City, High-Stakes Operation from the Judge’s Seat

Mistral AI Worldwide Hackathon — New York Edition

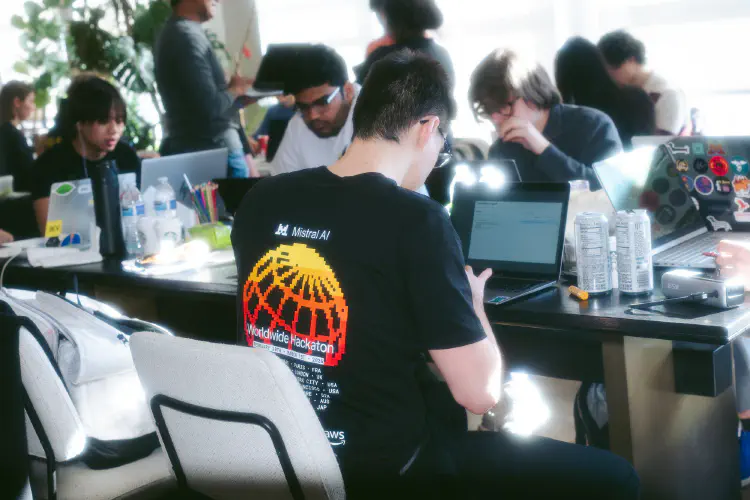

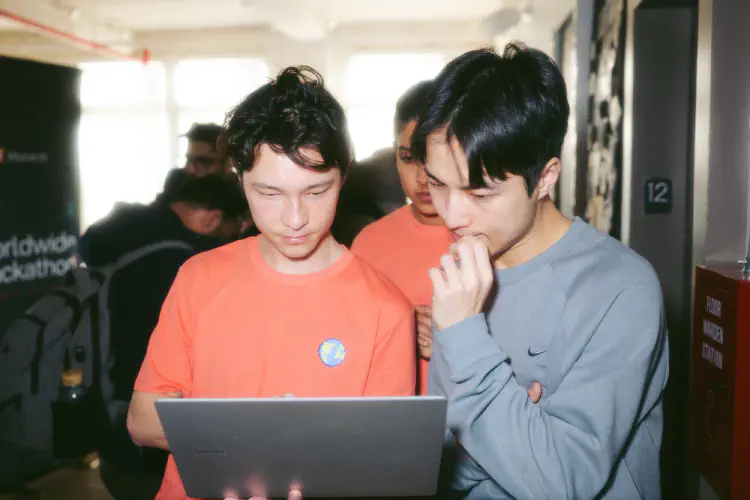

At 9 AM on February 28th, Verci Flatiron in Manhattan was already buzzing. Laptops open, monitors set up, breakfast croissants on the tables, a nod to the Parisian company behind it all. Across the room, hundreds of builders were settling in for what would become a 36-hour overnight sprint. Across the world, six other cities were doing the same thing.

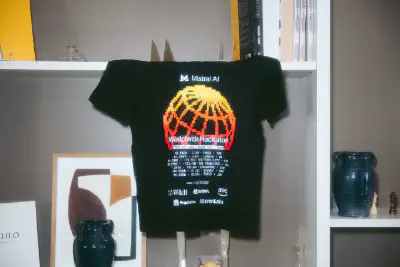

This was Mistral AI’s first-ever Worldwide Hackathon — their largest hackathon to date — running simultaneously across Paris, London, New York, San Francisco, Tokyo, Singapore, and Sydney. Over 7,000 people applied. 1,000 were selected. And I was there as a judge.

The Event at Scale

The numbers alone tell a story:

- Seven cities

- 7,000+ applications from a highly competitive global pool

- $200K+ in total prizes

- A grand prize of $10,000 cash plus $15,000 in Mistral credits plus a hiring opportunity at Mistral AI — with local winners advancing to a global final on March 9th via YouTube livestream

But the numbers only capture part of it.

What made this event structurally different from a typical hackathon was the simultaneous global format. All seven cities kicked off within the same window, built against the same clock, and competed for the same global grand prize, with each city’s winners advancing to a single global final on March 9th. The competition was layered: you were building against the room, and the room was building against the world.

“The breakfast you just had was ‘France’…and every meal will represent each hosting city.” The cultural details! For two days, we served French croissants for breakfast, Japanese sushi for dinner, and so on. The real-time awareness that builders in Tokyo were wrapping up while NYC was just hitting its stride at midnight created a sense of scale that I had never experienced at a hackathon before. There was something electric about knowing that at any given moment during the 36-hour sprint, someone on the other side of the planet was hacking on the same models, under the same rules, toward the same deadline.

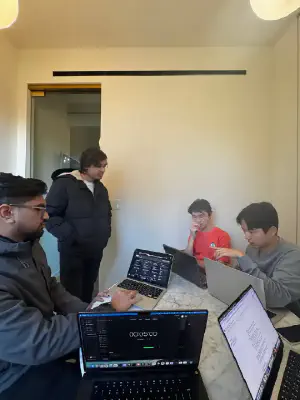

NYC’s venue was packed. Teams of one to four people, working through the night, building with Mistral’s latest Mistral 3 models. The energy shifted across the 36 hours — from excited planning, to the quiet intensity of the overnight build, to the focused urgency of final demos.

Why Mistral — The Technology Behind the Build

Spending two days embedded with Mistral and NVIDIA teams from developer relations and solution architecture gave me a perspective that most coverage of this company misses.

Mistral’s CEO Arthur Mensch has been explicit about their strategic bet: “The fight for AI supremacy is between open and closed systems, not geography.” That philosophy shaped every aspect of this hackathon. Builders owned their stack from the first line of code — fully permissive, unrestricted commercial use. No API lock-in. No usage caps.

The flagship, Mistral Large 3, is a Mixture-of-Experts model that:

- has 675 billion total parameters but only 41 billion active at inference, enabling frontier-level capability at a fraction of the compute cost

- handles a 256K token context window with native multimodal support across text and images

- achieves a 10x performance gain over the prior generation (on NVIDIA’s GB200 NVL72)
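To put the 675 billion / 41 billion split in perspective, here is a back-of-envelope sketch of how small the active share is. The two parameter counts come from the figures above; the function name is my own, and the idea that per-token compute scales roughly with active parameters is a common rule of thumb rather than a measured result.

```python
# Figures quoted in the article, in billions of parameters.
TOTAL_PARAMS_B = 675.0
ACTIVE_PARAMS_B = 41.0


def active_fraction(active_b: float, total_b: float) -> float:
    """Share of the model's parameters used for a single token."""
    if total_b <= 0:
        raise ValueError("total_b must be positive")
    return active_b / total_b


frac = active_fraction(ACTIVE_PARAMS_B, TOTAL_PARAMS_B)
print(f"Active share per token: {frac:.1%}")  # prints "Active share per token: 6.1%"
```

Roughly 6% of the weights do the work for any given token. The catch is that all 675 billion parameters still have to be stored somewhere, so the savings show up in compute per token, not in memory footprint.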

But what made the hackathon technically interesting was the small model story. Ministral 3, a family of nine dense models across 3B, 8B, and 14B parameters, can run on devices with as little as 4GB of VRAM using 4-bit quantization. Standard laptops. No cloud. No internet required. For a hackathon, this means teams could prototype locally, iterate fast, and deploy without infrastructure dependencies.
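The 4GB figure is easier to believe once you do the arithmetic. The sketch below estimates the memory needed for the weights alone at a given bit width. It is a simplification under my own assumptions: it counts decimal gigabytes, ignores the KV cache, activations, and the small overhead of quantization scales, and the helper name is hypothetical.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate memory for the weights alone, in decimal gigabytes."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Model sizes named in the article, compared at 16-bit and 4-bit precision.
for size_b in (3, 8, 14):
    fp16 = weight_memory_gb(size_b, 16)
    int4 = weight_memory_gb(size_b, 4)
    print(f"{size_b}B: ~{fp16:.1f} GB at 16-bit, ~{int4:.1f} GB at 4-bit")
```

By this estimate the 3B model needs about 1.5 GB for its weights at 4-bit, which leaves headroom on a 4GB device for everything else, while the 8B and 14B models land around 4 GB and 7 GB respectively.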

In conversations with the Mistral team, a consistent theme emerged. They were not positioning Mistral as a benchmark competitor to OpenAI or Google. They were positioning it as infrastructure for builders who want sovereignty over their AI stack. Howard (Head of Communications) put it directly: “We are open source, because we want to empower the builders, giving you the technology IN YOUR HANDS.” Mensch himself frames this as a safer bet for any country building AI infrastructure locally. It accommodates cultural nuances, deploys on local hardware, and avoids dependence on centralized systems.

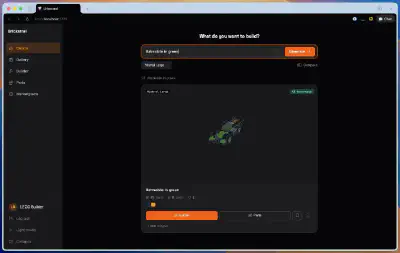

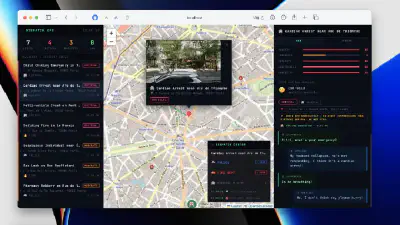

For the builders in the room, this was not theoretical. They were running models on their own machines, modifying architectures, and shipping demos that would have required API billing and vendor approval under a closed-model paradigm. That difference shaped the kinds of projects that got built.

Judging and Mentoring

What I found most rewarding was the dual role. I was there to evaluate, but the extended format allowed me to engage teams across multiple build stages.

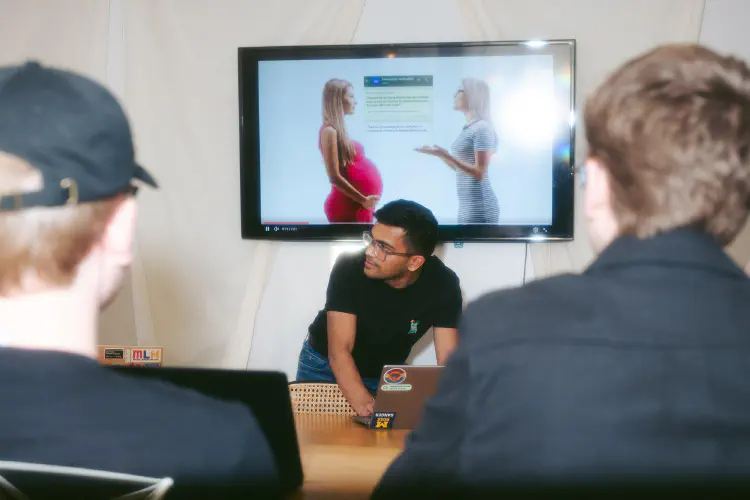

The mentoring conversations followed a pattern: teams would come up and show me technically impressive demos, and I would push them on the problem space:

“What user problem does this solve? Does this add value to how users currently get the job done?”

“How do you ensure safety? Have you been considerate about values including privacy, public good, and/or inclusion?”

These were not rhetorical questions. The teams that actively reflected on them (pausing, rethinking their approach, and coming back with sharper framing) were the ones that outperformed. There were solutions that doubled down on technical sophistication alone (and I was blown away), but none of them made it to the top.

The People

A hackathon of this scale becomes a crossroads. The judging panel alone spanned all seven cities. In the NYC room, I enjoyed the extended conversations with Mistral’s solution engineers, and listening to NVIDIA’s technical marketing team as they worked through participants’ proposals for using NVFP4 on the NVIDIA Blackwell platform. We also had an ML researcher from Tilde Research, a frontier research lab whose mechanistic interpretability work let us look under the hood of the Ministral models and check that they were safe and reliable.

And then there was the scene across the event hall itself. People hovering their hands in the air wearing VR headsets to create games for the Supercell track. Teams iteratively testing voice interactions using ElevenLabs technology. I occasionally lifted my eyes from the screen to the steady hum of spinning fans and the electric pulse of racing minds. In the entire space filled with cognitive energy, neurons sparked and ideas collided as collective effort met the raw power of accelerated silicon.

Three Takeaways

Open-source matters — and not for the reason you might think.

Mistral’s bet on open-weight models is not primarily about beating benchmarks. It is about a fundamentally different relationship between builder and infrastructure. Under a closed-model paradigm, you rent capability through an API, and the vendor controls pricing, availability, and what you can modify. Under Mistral’s approach, you own the weights. You can fine-tune, deploy on-premise, run offline on edge devices, and audit the model’s behavior. For enterprises navigating GDPR compliance, governments building sovereign AI capacity, and startups that cannot afford vendor lock-in, that ownership is a strategic necessity. Mistral is proving that it is commercially viable at scale.

Community is global, but building is local.

Seven cities, one competition, one set of models. While most “global” hackathons are online events, this one put builders in the same physical space in seven cities simultaneously and made them compete across borders. NYC’s builder density is unique — the spontaneous collaborations, the in-person debugging sessions, the energy of a packed room. These cannot be replicated over Zoom. The global framing gives the event its ambition. The local rooms give it its soul. And the layered competition — win your city, then face the world — created stakes that a single-site hackathon simply cannot match.

The best AI work is cross-disciplinary.

As I noted in my judging experience, the winning projects were not the most technically complex. They were the most thoughtful about real problems and responsible deployment. Teams that combined strong engineering with clear problem framing and genuine consideration for safety and public good were the ones that stood out. This pattern has been consistent across every hackathon I have been involved in.

What This Signals

Two things stayed with me after the event.

First, the open-source AI community does not just live on GitHub, disconnected in silos. This hackathon brought it to life — real people, in the same room, building together across seven time zones. The simultaneous global format created something that neither a single-city event nor an online hackathon can replicate: the feeling of being part of a worldwide movement with local roots. The potential for sustained, cross-border collaboration in the AI ecosystem is real, and events like this prove it.

Second, my own trajectory over the past month tells a story about what is possible when you show up and do the work. I went from organizing club-level events, to city-scale hackathons, to judging a global competition backed by some of the most significant companies in AI. The connective thread was not credentials or luck. It was Iterate, and the trust built through consistent execution.

The global finals are on March 9th. NYC’s local winners advance. I will be watching to see if the teams I mentored carry the momentum forward.

Mistral AI Worldwide Hackathon 2026 — New York Edition. Organized by Mistral AI, operated by Iterate. Backed by NVIDIA, AWS, Weights & Biases, Hugging Face, ElevenLabs, Jump Trading, Supercell, Tilde Research, White Circle, Giant Ventures, and RAISE.