Agents Don't Need Better Models. They Need Better Infrastructure.

From the Mistral Hackathon Floor to NVIDIA’s GTC 2026

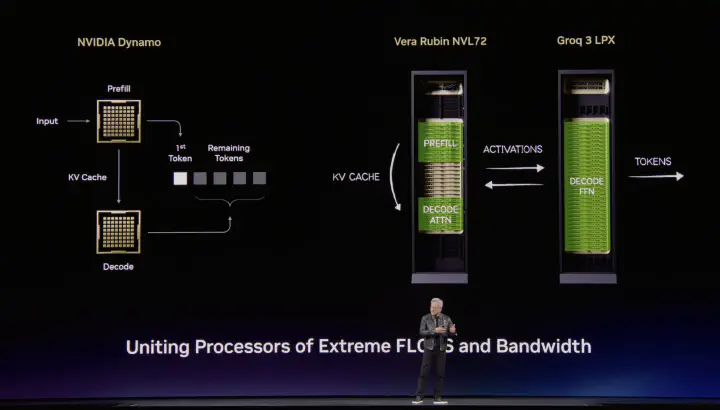

NVIDIA Dynamo — Vera Rubin NVL72 and Groq 3 LPX uniting extreme FLOPS and bandwidth (GTC 2026 keynote)

At 9 AM on February 28th, Verci Flatiron in Manhattan was already buzzing. Laptops open, monitors set up, French croissants on the tables. Builders were settling in for Mistral AI’s first-ever Worldwide Hackathon, a 36-hour sprint running simultaneously across seven cities, from Paris to Tokyo. I was there as a judge.

But the most interesting thing I watched that weekend wasn’t the demos. It was the infrastructure underneath them.

Some teams were running Ministral 3 locally — 14 billion parameters on a laptop, no cloud, no internet required. They iterated fast. They owned every layer of the stack. Other teams hit Mistral Large 3 via API, and as their agents chained call after call — scraping, classifying, generating, evaluating — they watched rate limiters tick and token counters climb. The contrast was sharp.

In the same room, NVIDIA’s technical marketing team was helping builders navigate NVFP4 quantization on Blackwell, optimizing inference for the projects being built in real time. I didn’t fully appreciate what they were doing until later. They weren’t selling GPUs. They were solving the inference problem that every agent builder in that room was about to hit.

Three weeks later, GTC 2026. NVIDIA and Mistral shared a stage. Half the keynote was about infrastructure for agents — not chatbots. Dynamo 1.0. OpenShell. CMX on BlueField-4. And days before the conference, Mistral released Small 4 — 119 billion parameters, explicitly designed for agentic tool-calling.

The through-line clicked. The hackathon showed me the problem. GTC showed me the answer.

I didn’t think about inference infrastructure until I watched it break, first on the hackathon floor, then in my own agent workflows. I built a job scanner that checks 23 company career pages daily. For each company it fetches listings, filters titles, classifies job descriptions for fit, and pushes results to Notion. One task from the user’s perspective. Roughly fifteen inference calls per company under the hood. On API pricing, every run adds to a growing line item. Scale this to a team, then to a company, and the math stops working.

The bottleneck in agentic AI isn’t the model. It’s the inference layer. And the stack forming between Mistral and NVIDIA — from open weights to optimized serving — is where the answer is taking shape.

It’s Not the Model. It’s the Inference Pattern.

Most infrastructure discussions focus on which model to use. The more consequential question is how agents use models — because it is fundamentally different from how chatbots do.

Consider three workflows I run regularly. Each one stresses infrastructure in a different way.

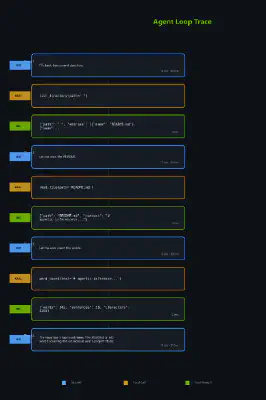

The job scanner is a parallel fan-out. Twenty-three companies checked simultaneously, each generating multiple inference calls for title filtering and job description classification. A burst of concurrent requests that hits API rate limits at exactly the wrong moment — mid-scan, with half the results in and half still pending. On self-hosted infrastructure, the constraint flips. Your GPUs, your concurrency ceiling. No external throttle.
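A minimal sketch of that fan-out, assuming an async client. `classify` is a hypothetical stand-in for one inference call, and `MAX_IN_FLIGHT` is an assumed ceiling: on an API it would track the provider's rate limit, on self-hosted hardware it would track what the GPUs can actually serve.

```python
import asyncio

MAX_IN_FLIGHT = 4  # assumed ceiling; set from your rate limit or GPU capacity


async def classify(company: str) -> str:
    """Hypothetical stand-in for one inference call."""
    await asyncio.sleep(0.01)  # simulates model latency
    return f"{company}: scanned"


async def scan_all(companies: list[str], limit: int = MAX_IN_FLIGHT) -> list[str]:
    gate = asyncio.Semaphore(limit)

    async def bounded(company: str) -> str:
        async with gate:  # at most `limit` calls are in flight at once
            return await classify(company)

    # gather preserves input order, so results line up with `companies`
    return await asyncio.gather(*(bounded(c) for c in companies))


if __name__ == "__main__":
    companies = [f"company-{i}" for i in range(23)]
    results = asyncio.run(scan_all(companies))
    print(len(results), "companies scanned")
```

The semaphore turns an uncontrolled burst into a bounded one. Requests queue on the client instead of being rejected mid-scan.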

The meeting ingester is sequential chaining. Fetch transcript, extract summary, identify action items, push to Notion. Four calls in strict series — each one depends on the output of the last. Latency compounds: two seconds per call means eight seconds of wait for something that feels like it should be instant. A chatbot takes two seconds. An agent chain takes eight. Same model, same hardware, four times the perceived latency.
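The arithmetic is simple enough to write down. This sketch assumes a flat two seconds per call, as in the example above, and contrasts the serial chain with what the same calls would cost if they were independent.

```python
def serial_latency(step_seconds: list[float]) -> float:
    """Each step waits for the previous one, so latencies add up."""
    return sum(step_seconds)


def parallel_latency(step_seconds: list[float]) -> float:
    """Independent steps can overlap, so the slowest one dominates."""
    return max(step_seconds)


# The four steps from the meeting ingester, at an assumed 2 seconds each.
steps = [2.0, 2.0, 2.0, 2.0]
print(serial_latency(steps))    # 8.0
print(parallel_latency(steps))  # 2.0
```

A chain cannot be parallelized away, because each call needs the previous output. The only lever left is making each individual call faster.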

The evaluator loop is iterative refinement. Draft a referral essay, evaluate against criteria, revise, re-evaluate. The context is reprocessed on each pass, and it grows with every iteration as prior drafts and feedback accumulate. Three iterations means at least three times the token bill for the same output. The model isn’t doing three times the thinking. The infrastructure is doing three times the work.
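To make the growth concrete, here is a rough sketch that counts input tokens per pass. The token counts are invented for illustration, and the model of "context grows by a fixed amount each pass" is a simplifying assumption.

```python
def input_tokens_per_pass(base: int, added_per_pass: int, passes: int) -> list[int]:
    """Input tokens read on each pass when nothing is dropped from context."""
    return [base + i * added_per_pass for i in range(passes)]


# Assumed numbers: a 2,000-token starting prompt, and 1,500 tokens of
# draft plus feedback appended after every pass.
per_pass = input_tokens_per_pass(base=2_000, added_per_pass=1_500, passes=3)
print(per_pass)       # [2000, 3500, 5000]
print(sum(per_pass))  # 10500
```

Under these assumptions the third pass alone reads 2.5 times what the first pass did, so the cumulative input bill grows faster than the pass count. This is the cost that cached context on the serving side is meant to reduce.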

The pattern is clear. Agents don’t just make more calls than chatbots. They make different kinds of calls — bursty, sequential, iterative, unpredictable — often within the same task. A single agent workflow might fan out in parallel, chain results in sequence, then loop over them iteratively. Infrastructure designed for steady-state chatbot traffic — one request in, one response out — can’t handle this.

This is exactly what NVIDIA’s Dynamo 1.0 targets. An orchestration layer that routes requests to GPUs with cached context, scales prompt processing and token generation independently, and autoscales based on latency SLAs instead of fixed capacity. Not a new inference engine — a coordination layer above the engines that already exist.

The Bill Is the Bottleneck

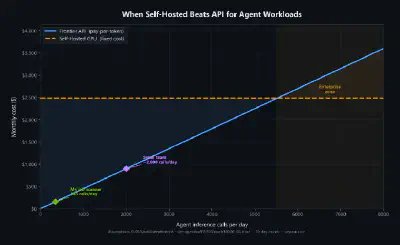

My job scanner: 23 companies, roughly 15 calls each, running daily. That is 345 inference calls per day for a single workflow. At API pricing, it is a real line item. On a self-hosted GPU running Mistral Small 4 via NIM, the marginal cost per call approaches zero after the fixed hardware investment.
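A back-of-the-envelope version of that comparison is below. Only the 345 calls per day comes from my scanner. The tokens per call, the API price, and the fixed monthly hardware cost are placeholder assumptions, so plug in your own numbers before drawing conclusions.

```python
import math

CALLS_PER_DAY = 23 * 15  # 345, from the job scanner


def monthly_api_cost(calls_per_day: int, tokens_per_call: int,
                     usd_per_million_tokens: float, days: int = 30) -> float:
    """API spend for one workflow over a month."""
    total_tokens = calls_per_day * days * tokens_per_call
    return total_tokens * usd_per_million_tokens / 1_000_000


def breakeven_workflows(fixed_monthly_usd: float, api_usd_per_workflow: float) -> int:
    """Smallest number of similar workflows whose API bill covers the fixed cost."""
    return math.ceil(fixed_monthly_usd / api_usd_per_workflow)


# All three inputs below are hypothetical placeholders, not quoted prices.
api_cost = monthly_api_cost(CALLS_PER_DAY, tokens_per_call=3_000,
                            usd_per_million_tokens=2.0)
print(round(api_cost, 2))                           # 62.1
print(breakeven_workflows(1_500.0, api_cost))       # 25
```

With these made-up inputs, one workflow is cheap on an API and the fixed cost only pays off at around two dozen workflows. The shape of the curve matters more than the exact figures.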

For a single developer, APIs win on simplicity. For a team running dozens of agent workflows daily, self-hosted wins on economics. For an enterprise with compliance requirements — finance, healthcare, defense — self-hosted isn’t optional. The data can’t leave the network.

But here’s the thing. This isn’t either/or.

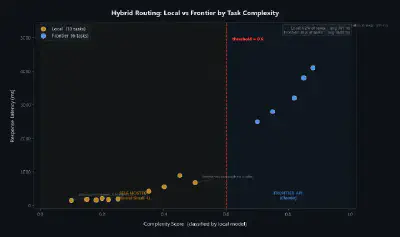

I saw it at the hackathon too. Teams that tried to run everything locally hit quality limits on complex reasoning. The harder tasks — multi-step analysis, ambiguous problem-solving — are where Claude and GPT-4 are measurably better. Classification, extraction, routing? Those run locally on a 24-billion-parameter model without breaking a sweat. The architecture that wins is hybrid: route easy calls to self-hosted open models, hard calls to frontier APIs.
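The routing rule fits in a few lines. This is a sketch of the idea, not any product's API: the `Call` type, the `sensitive` flag, and the task labels are all names I made up for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Target(Enum):
    LOCAL = "self-hosted open model"
    FRONTIER = "frontier API"


# Task types the article treats as easy enough for a local model.
EASY_TASKS = {"classification", "extraction", "routing"}


@dataclass(frozen=True)
class Call:
    task: str
    sensitive: bool = False  # hypothetical flag: data must stay on the network


def route(call: Call) -> Target:
    # Sensitive data stays local regardless of how hard the task is.
    if call.sensitive:
        return Target.LOCAL
    if call.task in EASY_TASKS:
        return Target.LOCAL
    return Target.FRONTIER


print(route(Call("classification")).value)             # self-hosted open model
print(route(Call("multi-step analysis")).value)        # frontier API
print(route(Call("multi-step analysis", True)).value)  # self-hosted open model
```

The order of the checks is the design decision: the compliance rule comes first, so a hard task on sensitive data accepts lower quality rather than leaving the network.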

This is exactly what Mistral’s CEO Arthur Mensch means when he says “the fight for AI supremacy is between open and closed systems.” Open models don’t need to beat frontier on every benchmark. They need to own the high-volume layer — the 80% of inference calls that are fast, predictable, and sensitive — and let frontier handle the rest.

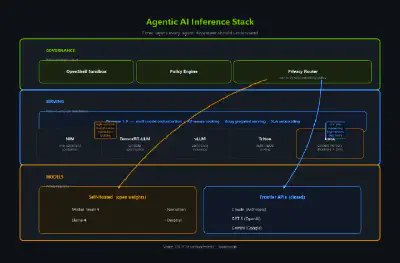

NVIDIA agrees. Their AI-Q enterprise research blueprint runs Nemotron locally and GPT-5.2 via API in the same pipeline. OpenShell’s Privacy Router automates this routing — based on data sensitivity policy, not developer preference. The hybrid model isn’t a compromise. It’s the reference architecture from the company building the GPUs.

The Infrastructure Layer Just Arrived

I am not going to recap the GTC keynote. Three announcements matter for anyone building agents today.

Dynamo 1.0 — the orchestration gap

Before Dynamo, scaling inference meant manually configuring Triton across nodes. Dynamo coordinates inference engines (TensorRT-LLM, vLLM, NIM) into a unified multi-node system. It routes requests to GPUs that have already cached the relevant context, cutting time-to-first-token in half. It separates prompt processing from token generation so each can scale independently. And it autoscales on latency SLAs: not fixed capacity, but actual performance targets.
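To show what cache-aware routing means in principle, here is a toy router. It is not Dynamo's API or its actual algorithm. `Worker`, `pick_worker`, and the scoring rule are hypothetical, and real systems track cached blocks rather than raw token tuples.

```python
from dataclasses import dataclass, field


@dataclass
class Worker:
    name: str
    cached: list = field(default_factory=list)  # token prefixes held in cache
    active: int = 0                             # requests currently in flight


def shared_prefix_len(a: tuple, b: tuple) -> int:
    """Number of leading tokens the two sequences have in common."""
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return n


def pick_worker(workers: list, prompt: tuple) -> Worker:
    def score(w: Worker) -> tuple:
        overlap = max((shared_prefix_len(p, prompt) for p in w.cached), default=0)
        return (overlap, -w.active)  # prefer cache overlap, then lower load
    return max(workers, key=score)


workers = [
    Worker("gpu-a", cached=[("system", "tools", "job")], active=3),
    Worker("gpu-b", active=0),
]
print(pick_worker(workers, ("system", "tools", "job", "description")).name)  # gpu-a
print(pick_worker(workers, ("unrelated",)).name)                             # gpu-b
```

The busier worker still wins the first request because it can skip recomputing the shared prefix. Weighting overlap against load is the hard part in practice, and this sketch sidesteps it with a strict ordering.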

The headline number: 7x throughput per GPU on DeepSeek R1. Built in Rust with Python extensibility — open source from day one. You don’t need this for a single GPU. You need it when agent traffic becomes unpredictable at scale — when the fan-outs, chains, and loops from dozens of concurrent workflows collide on the same cluster.

OpenShell — the governance gap

The enterprise blockers for autonomous agents aren’t technical. They’re about trust. OpenShell moves safety controls outside the agent runtime: a deny-by-default policy engine constrains file access, network calls, and subprocess execution at the OS level. Not in the system prompt. Not overridable by the model. Claude Code and Codex run unmodified inside it.
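Deny-by-default is easiest to understand as code. The sketch below only illustrates the shape of such a policy. It is not OpenShell's configuration format, the `Policy` class and its fields are hypothetical, and real enforcement happens at the OS level rather than in Python.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class Policy:
    allow_paths: tuple = ()  # glob patterns for permitted file access
    allow_hosts: tuple = ()  # glob patterns for permitted network hosts

    def permits(self, kind: str, target: str) -> bool:
        # Any action kind without an allowlist (here: subprocess) is denied.
        rules = {"file": self.allow_paths, "network": self.allow_hosts}.get(kind, ())
        return any(fnmatchcase(target, pattern) for pattern in rules)


policy = Policy(allow_paths=("/workspace/*",), allow_hosts=("api.notion.com",))
print(policy.permits("file", "/workspace/jobs.json"))  # True
print(policy.permits("file", "/etc/passwd"))           # False
print(policy.permits("subprocess", "rm"))              # False
```

Nothing is permitted unless a rule names it, so a forgotten rule fails closed. The agent never sees this object, which is why a prompt cannot talk its way around it.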

The Privacy Router is the part that makes the hybrid architecture real. It decides — based on organizational policy — which inference calls touch frontier APIs and which stay on local infrastructure. The routing diagram from the previous section isn’t speculative. It’s a shipping product.

CMX on BlueField-4 — the context gap

Agents accumulate context across tool calls. Results, conversation history, retrieved documents — it grows with every step. Eventually it exceeds GPU memory. Today’s answer is truncation or summarization, which means discarding information the agent might need later. CMX offloads context memory to BlueField DPUs, scaling the context window without discarding anything.
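The difference between truncating and offloading can be shown with a toy two-tier store. This says nothing about how CMX or BlueField works internally. `TieredContext`, the word-count budget, and the oldest-first eviction rule are all assumptions made for illustration.

```python
from collections import OrderedDict


class TieredContext:
    """Keeps recent entries in a small hot tier and moves older ones to a cold tier."""

    def __init__(self, hot_budget: int):
        self.hot_budget = hot_budget  # assumed budget, counted in words
        self.hot = OrderedDict()      # stands in for GPU memory
        self.cold = {}                # stands in for offloaded storage

    def _hot_size(self) -> int:
        return sum(len(text.split()) for text in self.hot.values())

    def add(self, key: str, text: str) -> None:
        self.hot[key] = text
        # Evict oldest entries until the hot tier fits; always keep the newest.
        while self._hot_size() > self.hot_budget and len(self.hot) > 1:
            old_key, old_text = self.hot.popitem(last=False)
            self.cold[old_key] = old_text  # offloaded, not discarded

    def get(self, key: str) -> str:
        if key in self.hot:
            return self.hot[key]
        return self.cold[key]  # raises KeyError if the key was never stored


ctx = TieredContext(hot_budget=5)
ctx.add("tool-1", "one two three")
ctx.add("tool-2", "four five six")
print("tool-1" in ctx.cold)  # True
print(ctx.get("tool-1"))     # one two three
```

With truncation, the first tool result would be gone once the budget was exceeded. Here it is slower to reach but still there when a later step needs it.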

This is the earliest signal that context management is becoming a hardware problem — not just a prompting problem. And for agent developers who have watched their context windows fill up mid-task, it is a signal worth paying attention to.

The pattern across all three: NVIDIA is not retrofitting chatbot infrastructure for agents. They are building infrastructure that understands how agents actually work — bursty traffic, shared context across calls, policy constraints on data flow, and memory that grows unpredictably. Purpose-built, not patched.

Three Layers, Not One

Three years ago, the question was which model. Then which framework. Now: which inference architecture.

The stack for agentic AI has three layers:

- The model layer — what reasons. Claude, GPT, Mistral, Llama.

- The serving layer — how it runs. NIM, TensorRT-LLM, vLLM, Dynamo.

- The governance layer — who controls what. OpenShell, privacy routing, policy engines.

Most agent developers — myself included, until recently — only think about the first. We pick a model, write the prompts, chain the calls, and treat everything below the API as someone else’s problem. That works when you’re prototyping. It stops working when you’re deploying — when the bill scales with every agent loop, when compliance requires data to stay local, when a burst of concurrent workflows overwhelms a rate limiter designed for chatbots.

The developers who understand all three layers will build systems that are faster, cheaper, and actually deployable in enterprises that care about compliance and cost. That is the gap between a demo and a product.

Three weeks ago, I watched builders at the Mistral hackathon hit inference limits with no clear answer. Today, the answer has a name — several names. Dynamo. OpenShell. NIM. Mistral Small 4.

I’m starting there. Rebuilding the same agent workflows I run on Claude’s API — on the Mistral + NVIDIA open stack this time. Same skills, different infrastructure. I’ll write about what I find.

Figures generated with agentic-inference, a Python module visualizing the concepts in this article. Perspective informed by judging Mistral AI’s Worldwide Hackathon (New York Edition, February 28 – March 1, 2026) and following GTC 2026 announcements (March 16–19, 2026). The hackathon was organized by Mistral AI and operated by Iterate.